I keep seeing stories about AI companies hiring philosophers, theologians, and sociologists to layer some sort of morality on top of their software. That’s not going to work.

You can’t create a moral “intelligence” by pasting rules, frameworks, and oversight boards on top of it. I’ll explain why below, but here’s the practical takeaway.

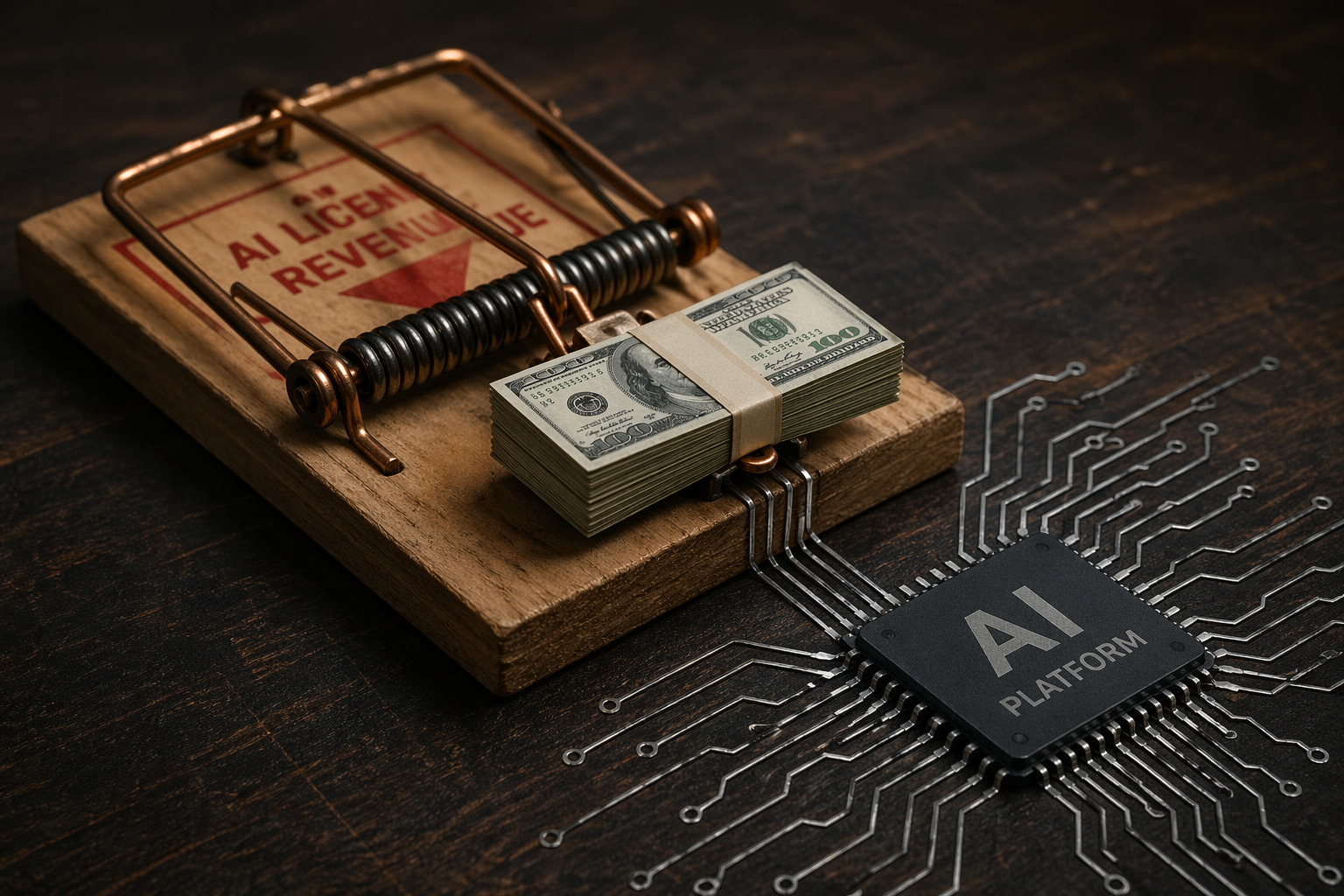

Don’t believe the sales pitch about moral AI. Whatever “morality” AI might develop, it won’t be the same as human morality, so you need to plan for human oversight.

The Problem

Early attempts at imposing moral rules on AI resulted in comical failures. (Recall the way Gemini made a mockery of itself with ham-fisted rules about image generation.)

Fortunately, AI companies now understand that morality isn’t a simple ruleset, and they’ve largely moved past content filters and “refusal engines.” Current approaches, such as reinforcement learning from human feedback, “constitutional AI,” and value learning, attempt something more ambitious: they train systems to absorb human values rather than follow explicit rules. The hope is that this will produce something like genuine moral reasoning from the inside out.

It’s a serious effort, but I don’t think it will work for a very simple reason.

Human morality is not a list of rules pasted on top of an otherwise complete intelligence. It is not a moderation layer or a content filter. It’s also not a linguistic structure — not a set of concepts that can be absorbed by learning how words relate to other words.

Human morality emerged along with intelligence. The precursors to and foundations of human morality developed with life itself. For example, humans show a preference for their own offspring over the offspring of others. That kind of morality literally becomes a part of the body and guides the way it interacts with the world. (See John Vervaeke’s work on relevance realization. Here’s a short introduction.)

What we call “morality” (or just “values”) is woven into our perception of the world. It’s part of emotion, memory, kinship, shame, trust, reciprocity, fear, and everything else we experience as humans. The important point is that these don’t start off as intellectual concepts. They start as experiences.

A child feels empathy before it knows the word. It feels the sting of unfairness before it can articulate fair rules. Betrayal hurts before “betrayal” means anything at all.

This means that moral concepts aren’t free-floating linguistic structures or intellectual ideas. They’re grounded in physical and emotional experience that precedes language and conscious understanding entirely. The word “shame” gets its meaning from the feeling of shame, not from its relationships to other words.

A child doesn’t become an intelligent manager of words and symbols and then receive a moral software upgrade. Moral formation begins almost immediately. Before children can understand ethics, they already know the building blocks on which their ethics will stand — that approval matters, betrayal hurts, promises bind, unfairness stings, and trust is valuable.

Humans live ethics before we know what it is.

Why Training on Moral Language Isn’t the Same Thing

Modern AI systems are essentially giant predictive engines trained to generate plausible outputs from massive amounts of data. That training data does include enormous quantities of human moral reasoning, literature, philosophy, and emotional expression. AI companies believe this training process causes systems to internalize values rather than merely simulate them.

But that belief fundamentally misunderstands what moral concepts are and how they get their meaning.

When an AI system processes the word “shame,” it learns how that word behaves relative to thousands of other words across millions of contexts. What it doesn’t have — and can’t get from the text — is the experience that gives “shame” its meaning in the first place.

The concept in the AI is a shape without substance. It has the structure of moral language without the emotional experience that gives moral language its meaning.

This isn’t an engineering or programming problem to solve. It’s a consequence of what these systems are.

They’re machines.

Even Star Trek Got It Wrong

As a long-time Star Trek fan, I have to stop here and admit that the franchise got this wrong.

The writers made this “layering-on error” when they came up with the idea that Data could have an “emotion chip.”

Data is a touching character because he wants to feel. He is morally earnest, endlessly curious, and deeply loyal — and the show treats his lack of emotion as a kind of incompleteness.

When he finally receives the emotion chip in Generations, it doesn’t complete him. It destabilizes him.

The writers seem to have intuited something important: you can’t bolt feeling onto an otherwise complete intelligence and get a smoothly functioning moral agent.

Humans don’t have an emotion chip. Emotion isn’t an addition to intelligence. It’s woven into it from the beginning.

Neuroscience and Emotions

Neuroscience confirms what Star Trek only half understood.

Antonio Damasio’s research on patients with damage to the ventromedial prefrontal cortex, a region involved in emotional processing, showed that losing emotional response doesn’t turn people into Spock-like logical geniuses. Rather, it cripples their decision-making, especially in personal and social matters.

The most famous historical case is Phineas Gage, the 19th-century railroad worker who survived after a rod passed through his frontal lobe. He emerged with his intellect intact but his personality and moral judgment drastically changed.

Emotion isn’t a module you can add or remove. It’s the substrate on which moral reasoning runs.

Possible Solutions

I don’t want to be dogmatic and say that AI will never have an effective moral code. There are experimental methods that try to mimic the mechanics of human morality, with pain/reward circuits, embodied robotics, and other training methods.

Those approaches have the advantage of trying to confront the real problem, but I don’t think they solve it.

First-person experience and feelings seem key to true morality, and it’s hard to see how an AI system will ever have them.

And how will we know if it does?

The Hard Conclusion

As things stand, there isn’t a clear path to autonomous “moral AI” in the foreseeable future.

The gap between human morality and AI morality is the difference between knowing the definition of shame and having been ashamed.

No amount of sophisticated training on human-generated text bridges that gap, because the text was never the source of the morality in the first place.

Keep Watch

For all these reasons, human oversight will remain necessary, and it can’t be superficial oversight based on rules from the HR department.

Children like to question rules, and they often feel that if an adult can’t give a rational explanation for a rule, the rule can be discarded.

A parent has to have the moral sense and courage to say, “I can’t explain why right now, but this is the rule and you will obey it.”

Humans need the same moral courage with AI. Even when its arguments seem logical, human intuition has to prevail.