Social media chatter about AI tends towards two extremes.

- It’s just predicting the next word

- It’s imitating human thought

Both extremes are wrong, and I can show you that from your own experience.

The human vs. the digital chess player

I’ve played chess a bit, and I’ve noticed that when I choose my next move I do not have a database of millions of chess games in my head to guide my next choice. I can’t look at a board and think, “This is the same configuration as these other 1,259 games, and the next move most correlated with winning is queen to knight’s seven.”

A computer can do something like that. It can draw on stored games and evaluate millions of possible positions, so the way it considers its next move bears almost no relation to the way a human does.

The same is true with text. The way an LLM approaches language is radically different from the way a human does.

Patterns within patterns

Now consider the opposite claim — that AI is merely predicting the next word. People often compare AI to the autocomplete function in a search bar.

That’s a bad comparison.

When you interact with AI, the responses clearly involve something much larger.

To see what I mean, think about the typical structure of an AI response. You ask a question, and the answer usually looks something like this:

- A “polite framing” (aka flattery)

- A structured answer to the question

- Additional context or clarification

- A suggestion of next steps or related ideas

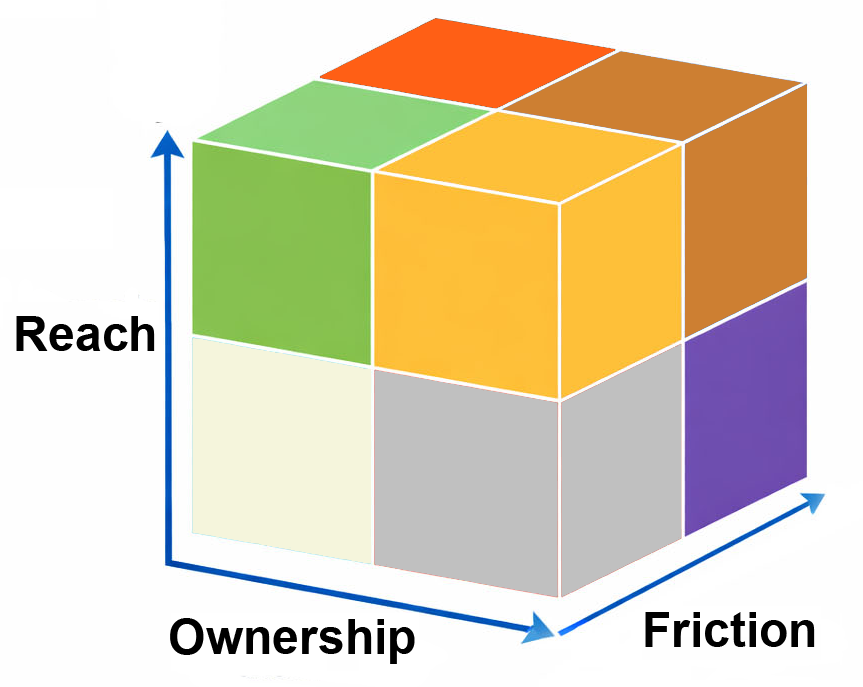

AI absolutely does choose the statistically most likely next word, but that choice is nested inside a larger context.
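To make that nesting concrete, here is a toy sketch in Python. It is nothing like a real LLM, which uses a neural network rather than a lookup table. The tiny corpus and the function names (`build_model`, `predict_next`) are invented purely for illustration. The only point is that the same "pick the most likely next word" rule gives a different answer once more of the surrounding context is taken into account.

```python
from collections import Counter, defaultdict

# Toy corpus, made up for this illustration only.
CORPUS = [
    "the bank raised interest rates",
    "the bank raised fees again",
    "the bank raised prices",
    "we walked along the river bank slowly",
    "she sat on the river bank slowly",
]


def build_model(sentences, context_size):
    """Count which word follows each run of `context_size` words."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for i in range(context_size, len(words)):
            context = tuple(words[i - context_size:i])
            counts[context][words[i]] += 1
    return counts


def predict_next(model, text, context_size):
    """Return the most frequent follower of the last `context_size` words, or None."""
    context = tuple(text.split()[-context_size:])
    followers = model.get(context)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


prompt = "we walked along the river bank"

# Looking only at the last word ("bank"), money talk wins.
print(predict_next(build_model(CORPUS, 1), prompt, 1))  # raised

# Looking at the last two words ("river bank"), the river wins.
print(predict_next(build_model(CORPUS, 2), prompt, 2))  # slowly
```

Both calls are "predicting the next word." What changes is how much context the prediction sits inside, and a real AI system conditions on vastly more than two words.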

Here’s a practical example.

What AI said about my writing

I recently took a chapter from one of my books and rewrote it by hand. I asked ChatGPT to compare the two versions and tell me what was different.

Here’s a sample of the analysis.

Original voice:

- slightly academic

- explanatory

- careful

Handwritten voice:

- conversational

- argumentative

- witty

- impatient

That’s so far beyond “predicting the next word” that …. Honestly, if you think that’s all that AI is doing, you simply haven’t used AI that much. Or you weren’t paying attention.

The analysis AI did on my material was algorithms inside algorithms calling subroutines. When it came time to type an answer, sure, there was some statistical “best next word” going on, but that fails to capture the essence of it, in the same way that “he was just pressing the next key” fails to explain what a human author is doing.

Why this matters

Misunderstanding how AI works leads people to make two opposite mistakes.

The first group treats AI like a mind. They attribute intention, reasoning, and understanding to something that’s fundamentally a statistical system. This group might mistakenly rely on AI for factual information. Big mistake.

The second group dismisses AI as a trivial autocomplete machine. They assume it can’t produce sophisticated output because it’s “just predicting the next word.” This group underestimates the remarkable power of AI systems and won’t use them to their full capacity. That’s a lost opportunity.

Avoid both extremes. Yes, AI is “predicting the next word,” but it’s not just doing that. There are layers and layers of complexity behind the statistical “next word” decisions.

The resulting output is so compelling that you can easily be tempted to mistake it for thought. But AI makes mistakes a 4-year-old wouldn’t, because it’s just doing math. It has the linguistic facility of the 20 most intelligent people you know, but it might stumble over the difference between uphill and downhill.

AI is “just math,” not a mind. But don’t underestimate what math can do.